How Not to Get Ripped Off by AWS - A CDN Cost Reduction Journey

I was thinking about what to write for my next post, and after sharing this story and getting a good response, I decided to write about it.

0. Getting Started

The servers I'm responsible for run the service with the most users (16 million) at my current company.

I can't reveal the exact traffic numbers, but monthly traffic is on the petabyte (PB) scale.

The joy of having many users was short-lived, as the burden of ever-increasing communication costs (IDC, AWS CloudFront costs, etc.) kept growing.

Therefore, a cost reduction task force was formed, and together with a client developer, we developed various strategies.

In the end, by eliminating spending that had been wasted for a long time, we cut the total communication costs in half, and I earned the nickname 'Thanos' within the team.

1. You Need to Accurately Understand the Current Situation to Reduce Costs

The most important thing before starting cost reduction is understanding the current state of the systems you use.

1-1. What Type of Traffic Pattern Does Our CDN Have?

We need to analyze the characteristics of the CDN in use. It can be broadly divided into two patterns:

1. Is traffic from files generated by individual users high?

2. Is traffic from file sets that all users download high?

In my case, it was the second pattern: traffic from file sets that all users download.

I can't say exactly what files they are, but think of them as files that every user must download once a day.

1-2. How Do You Identify These Patterns?

How can we analyze these usage patterns? You could check through code, but the most accurate way is to look at the CDN provider's reports.

In my case, I use AWS CloudFront, and I could easily identify CDN usage patterns through the basic reports provided by CloudFront.

1-3. Analyze and Utilize CDN Provider Billing Patterns

Traffic billing patterns are mainly divided into two types.

Consider the pros and cons of both patterns carefully and choose the one that fits your product.

- Data Transfer Based Billing

Data transfer based billing is exactly what it sounds like. Whether 100% of users download a 100 KB file at 9 AM, or 25% of users download it at each of four different times, the cost charged is the same.

You're billed based on the actual bytes delivered to users. Most recent cloud providers use a data transfer based billing model.

Since you pay for what users actually use, there are no so-called "unfair charges." However, when your user count grows and actual traffic increases, you end up spending a lot of money.

My guess is that providers followed this model because consumer internet outside Korea is often billed by usage as well.

- Peak Bandwidth Based Billing

Peak bandwidth based billing is the opposite of data transfer based billing.

You're billed based on the bandwidth during the time period when the most clients were simultaneously connected and downloading files that month.

Let me give an example. Let's assume there's a file set that every user downloads once a day.

If 100% of users download the file at 9 AM, that month's cost is based on the peak traffic at 9 AM.

But if 25% of users download at each of 9, 10, 11, and 12 o'clock, you're billed for only 25% of that peak bandwidth.

With this model, if you strategize well and control the endpoints, you can save a lot of costs.

However, since CDN providers also make less money this way, this model seems to be disappearing nowadays.
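To make the difference between the two billing models concrete, here is a minimal Python sketch. The user count, file size, and one-hour window are assumed values for illustration, and it assumes downloads are spread evenly within each hour. The function names are hypothetical.

```python
def transferred_gb(total_users: int, file_mb: float) -> float:
    """Total data delivered in a day, in GB (what transfer based billing charges for)."""
    return total_users * file_mb / 1024


def peak_mbps(users_in_busiest_window: int, file_mb: float, window_seconds: int) -> float:
    """Average bandwidth in the busiest window, in megabits per second
    (what peak bandwidth based billing charges for)."""
    megabits = users_in_busiest_window * file_mb * 8
    return megabits / window_seconds


# Assumed example values, not figures from the actual service.
USERS = 1_000_000
FILE_MB = 1.0
HOUR = 3600

# Case A: every user downloads during the 9 o'clock hour.
peak_all_at_once = peak_mbps(USERS, FILE_MB, HOUR)

# Case B: 25% of users download in each of four separate hours.
peak_spread_out = peak_mbps(USERS // 4, FILE_MB, HOUR)

# The data delivered is identical in both cases.
data_all_at_once = transferred_gb(USERS, FILE_MB)
data_spread_out = 4 * transferred_gb(USERS // 4, FILE_MB)

print(f"peak, all at once: {peak_all_at_once:.0f} Mbps")
print(f"peak, spread out:  {peak_spread_out:.0f} Mbps")
print(f"data delivered:    {data_all_at_once:.1f} GB vs {data_spread_out:.1f} GB")
```

Under transfer based billing both cases cost the same, while under peak based billing the spread-out case is billed at a quarter of the bandwidth.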

2. Let's Develop a Habit of Calculating

This is something I wish all client developers would cultivate. The habit of calculating traffic.

It's not a difficult calculation. Here's how it works with these criteria:

Criteria

- File size downloaded: 1MB

- Per GB: $0.040

- Active Users: 1,000,000

- Billing method: Data transfer based

Daily traffic = (Traffic consumed per user per day) * (Number of users)

Daily traffic: 1MB * 1,000,000 = 1,000,000MB (976.5625GB)

Daily traffic cost: 976.5625GB * $0.040 = about $39

Weekly traffic cost: $39 * 7 = $273

Monthly traffic cost (4 weeks): $273 * 4 = $1,092
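The same arithmetic as a small Python sketch, in case you want to plug in your own numbers. The function name is hypothetical. Like the figures above, it rounds the daily cost to whole dollars before multiplying, and uses 1GB = 1024MB.

```python
def daily_traffic_gb(file_size_mb: float, active_users: int) -> float:
    """Traffic per day in GB, assuming every active user downloads the file once a day."""
    return file_size_mb * active_users / 1024


# Criteria from the example above.
FILE_SIZE_MB = 1
ACTIVE_USERS = 1_000_000
PRICE_PER_GB_USD = 0.040

daily_gb = daily_traffic_gb(FILE_SIZE_MB, ACTIVE_USERS)

# Round the daily cost to whole dollars first, as the article does.
daily_cost = round(daily_gb * PRICE_PER_GB_USD)
weekly_cost = daily_cost * 7
monthly_cost = weekly_cost * 4  # 4 weeks

print(f"daily traffic: {daily_gb} GB")
print(f"daily: ${daily_cost}, weekly: ${weekly_cost}, monthly: ${monthly_cost}")
```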

1 megabyte? How much could that be? But we ended up spending a million won per month on just that file.

You need to develop the habit of making these calculations every time you add a file set.

3. Please Reduce Duplicate Downloads

Continuing from what I discussed in (2), and as mentioned in (1), analyzing traffic usage patterns is important.

As it turned out, the client developers had no way of telling whether the server had changed the file, so they built the client to download and overwrite everything whenever a trigger fired. In reality, this file changes maybe once a month.

In the end, only $39 should have been spent, on the one day the file changed, but $1,092 was being spent every month.

The most recommended approach for such cases is to have the client check for changes and only update when there are changes.

3-1. Checking for Duplicate Downloads Using File Hash

For those who may not know what a hash is, I recommend reading the article below.

[Hash Function - Wikipedia](https://ko.wikipedia.org/wiki/%ED%95%B4%EC%8B%9C_%ED%95%A8%EC%88%98)

Anyway, regardless of which algorithm you use (MD5, SHA1, SHA256), if you can extract a unique value of the file, you can check for changes.

Just saying this might not make it clear, so let me give an example.

Let's say there's a Windows application that patches a file at https://cdn.yangs.kr/app/1/1.exe.

Then we generate a SHA256 hash of 1.exe and upload it as https://cdn.yangs.kr/app/1/1_exe.checksum (64 bytes).

Client Patching Sequence

1. Get the SHA256 hash of the local file.

2. Download the checksum file (1_exe.checksum) from remote.

3. Compare the local file's SHA256 with the string received from remote.

3-1: If the strings are the same, don't download the exe. (Since file integrity was verified through the SHA256 file hash, downloading again is redundant.)

3-2: If the strings are different, download the exe.

With this approach, if the file changes once a month, the monthly cost drops from $1,092 to about $40 ($39 for the exe download + about $1 for the checksum downloads).
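The client-side check can be sketched in Python roughly as follows. This is a minimal illustration, not the code from the actual client: `sha256_of_file` and `needs_download` are hypothetical names, and how the checksum string is fetched from the CDN is left out.

```python
import hashlib
from pathlib import Path


def sha256_of_file(path, chunk_size=1024 * 1024):
    """Return the SHA256 hex digest of a local file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def needs_download(local_path, remote_checksum):
    """Decide whether the large file must be downloaded again.

    remote_checksum is the 64-character hex string read from the small
    checksum file on the CDN (assumed to be fetched by the caller).
    """
    path = Path(local_path)
    if not path.exists():
        # Nothing local yet, so the file has to be downloaded.
        return True
    # Same hash means same content, so the download can be skipped.
    return sha256_of_file(path) != remote_checksum.strip().lower()
```

Only the 64-byte checksum is fetched on every trigger. The exe itself is fetched only when `needs_download` returns `True`.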

3-2. Checking for Changes Using HTTP Standard Spec

The method above is the cleanest, but it requires changing the upload process. If a person is simply uploading files by hand via FTP, it is hard to adopt right away. Fortunately, the HTTP standard already has this case covered.

[ETag (developer.mozilla.org)](https://developer.mozilla.org/ko/docs/Web/HTTP/Headers/ETag)

Almost all commercial CDN providers support the ETag spec.

In my case, I'm using AWS CloudFront + S3, and S3 supports this spec, making it easy to utilize.
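In practice this works as a conditional GET. The client keeps the ETag from the previous response and sends it back in the `If-None-Match` request header. If the file has not changed, the server answers `304 Not Modified` with no body. Below is a minimal Python sketch using only the standard library. `fetch_if_changed` and its `opener` parameter are hypothetical names introduced for illustration, not the code used in the project.

```python
import urllib.error
import urllib.request


def fetch_if_changed(url, cached_etag=None, opener=urllib.request.urlopen):
    """Download url only if its ETag differs from cached_etag.

    Returns (body, etag). body is None when the server answers
    304 Not Modified, meaning the cached copy is still valid.
    """
    request = urllib.request.Request(url)
    if cached_etag:
        # Ask the server to send the body only if the ETag no longer matches.
        request.add_header("If-None-Match", cached_etag)
    try:
        with opener(request) as response:
            return response.read(), response.headers.get("ETag")
    except urllib.error.HTTPError as err:
        # urllib reports 304 as an HTTPError; here it just means "unchanged".
        if err.code == 304:
            return None, cached_etag
        raise
```

The client stores the returned ETag next to the downloaded file and passes it as `cached_etag` on the next check. A 304 response carries only headers, so the transfer cost is close to zero.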

4. Please Use Compression

We have this amazing technology called "compression."

Since most providers bill based on "data transfer," the less data actually delivered to clients, the lower the costs.

Data compression is a technique for efficiently storing data in less storage space, or the actual application of that technique.

[

Data Compression - Wikipedia From Wikipedia, the free encyclopedia. Data compression is a technique for efficiently storing data in less storage space. It converts data into a smaller size... en.wikipedia.org

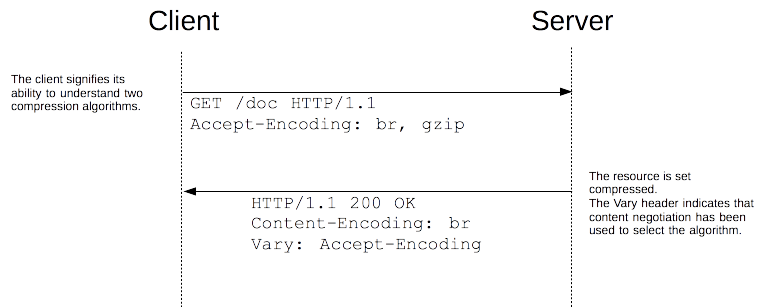

](https://ko.wikipedia.org/wiki/%EB%8D%B0%EC%9D%B4%ED%84%B0_%EC%95%95%EC%B6%95) 4-1. Compression Through HTTP Standard Spec

The people who created the HTTP protocol also tried to reduce data transfer in any way possible.

Unlimited data is the norm in Korea, but even now there are ISPs in Europe and America that bill by usage.

[Compression in HTTP (developer.mozilla.org)](https://developer.mozilla.org/ko/docs/Web/HTTP/Compression)

As mentioned in the "Data Compression" section, you can compress using various methods.

Source: MDN

'Accept-Encoding' is the request header in which the client lists the compression methods it can decode.

'Content-Encoding' is the response header that tells the client which compression method the server used.

Simply put, when the client (Chrome, IE) says "I can decompress these types," the server picks one of the listed types and compresses the response accordingly.
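The negotiation can be illustrated with a small Python sketch using the standard `gzip` module. Both functions are hypothetical stand-ins for what a real server and HTTP client do internally. The sketch is simplified: it only handles gzip and ignores `q=` weights in `Accept-Encoding`.

```python
import gzip


def encode_response(body: bytes, accept_encoding: str):
    """Server side: compress only if the client said it can decode gzip."""
    offered = {token.split(";")[0].strip().lower()
               for token in accept_encoding.split(",")}
    if "gzip" in offered:
        return gzip.compress(body), {"Content-Encoding": "gzip"}
    # The client did not offer gzip, so send the body as-is.
    return body, {}


def decode_response(body: bytes, headers: dict) -> bytes:
    """Client side: undo whatever Content-Encoding the server declared."""
    if headers.get("Content-Encoding") == "gzip":
        return gzip.decompress(body)
    return body


# A repetitive JSON-like payload, made up for illustration.
payload = b'{"item": "value", "enabled": true}' * 1000

wire_body, wire_headers = encode_response(payload, "gzip, deflate, br")
print(f"original: {len(payload)} bytes, on the wire: {len(wire_body)} bytes")
assert decode_response(wire_body, wire_headers) == payload
```

How much you save depends on the content. Text formats such as JSON compress well, while files that are already compressed (images, archives) gain very little.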

[Amazon CloudFront - New Gzip Compression Feature! | Amazon Web Services](https://aws.amazon.com/ko/blogs/korea/new-gzip-compression-support-for-amazon-cloudfront/)

Almost all CDN providers offer GZIP-related headers, so use them actively.

I spoke in terms of web browsers, but recent HTTP clients (okhttp3, Apache HttpClient) support this spec as well.

Just enable the option and you can reduce transfer costs with minimal effort.

5. Reflections After Running a Cost Reduction Project in a Product Team

A wild developer appeared.

Honestly, product teams really dislike these cost reduction projects, because they can significantly affect the stability of a service that's running fine. At my company, fortunately, we were able to proceed without issues through close collaboration with the client developer.

I'd like to briefly share what I felt while leading this cost reduction effort.

1. Share actual costs and figures with all developers to motivate them.

At first, the client developers weren't very enthusiastic, for the stability reasons I mentioned above.

I thought about how to persuade them and decided to show visible numbers.

I can't reveal absolute figures on this blog, but after understanding the current situation as explained in the sections above, I told them how much each task could cut from our total AWS costs.

As a result, I believe we gave the developers good motivation, and I think we received good results in 2020... (I'll explain why I'm trailing off at the end)

2. Cost reduction projects should be done in phases rather than all at once

It would be great if we could dedicate 100% of the product developers' M/M (man-months) to this, but that's not the reality of profit-making companies. In such cases, it's important to have an overall plan and apply it to services in stages.

- Plan A (Jan ~ Mar): Cost optimization for XX feature

- Plan A Monitoring/Results Report (2 weeks after launch): Traffic and cost reduction after release

- Plan B (Apr ~ May): Cost optimization for XX feature

- Plan B Monitoring/Results Report (2 weeks after launch): Traffic and cost reduction after release

Doing it in phases is important not only for developer resources but also for reporting results.

'We reduced costs by this much through cost reduction for Feature A' is the hottest topic at weekly meetings and the most important issue for management. Also, these metrics help estimate how much cost reduction is possible relative to developer resources for similar future tasks.

As a side note, I once wanted to release as quickly as possible and released two features together, which caused a lot of trouble for exactly these reasons: it was hard to report the effect of each feature separately.

3. When reporting to upper management, leave about 10% as a buffer.

I think this is more important than it sounds. If cost reduction falls short, you'll be under scrutiny, but if it exceeds expectations, you can walk around with your head held high.

When sharing progress in 'weekly reports', 'monthly reports', etc., have the sense to leave about a 10% buffer from your expected cost reduction.

As a side note, while working with the client developers, we wondered 'will we get a fat incentive in 2021?!' but I actually ended up leaving the company right after completing the main cost reduction work. It's a shame, but I have no regrets, since I left to pursue something more important to me.

6. In Closing

This cost reduction project started in January 2020 with a small team of 3 people and proceeded through 6 phases.

I left the company before the final phase, which honestly weighed on my mind.

I don't know if those colleagues will ever see this post, but I want to thank the project team members who worked on the cost reduction project with me. They never showed any sign of hardship, even though I gave them a hard time with this work.

Actually, this post was written bit by bit starting from October 2020 and is being published in 2021.

Since I'm also writing from memory, there may be things I've said incorrectly.

If you see any mistakes or conceptual errors, please leave a comment and I'll check and correct each one!