The Day Sentry DDoS'd Us

Introduction

On an otherwise quiet day at work, I received a message from the network team:

International traffic is spiking. Should we block it?

The service I managed was attacked fairly often, so I was about to look up the source IP and block it.

But the IP looked familiar. It turned out to be our self-hosted Sentry server running in the cloud.

Today I'll share this story.

Let's Look at the Logs

Some context first: Sentry was hooked up to one of our deployed React projects. A typical line from the access log looked like this:

123.123.123.123 GET /bundle/aaaaa.js? "sentry/22.6.0 (https://sentry.io)"

The logs showed a steady stream of requests with the User-Agent "sentry/22.6.0 (https://sentry.io)".

The requested file was our JS bundle, and because it was quite large, these requests were eating up a lot of bandwidth.
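To confirm where the traffic is coming from, a quick tally of requests per User-Agent is usually enough. Below is a minimal Python sketch, assuming the User-Agent is the last double-quoted field on each line, as in the sample line above. `count_user_agents` and `sentry_requests` are hypothetical helper names, and a real access log format will likely need a different pattern.

```python
import re
from collections import Counter

# Assumption: the User-Agent is the last double-quoted field on each log line,
# as in the sample line above. Adjust this pattern to your real log format.
UA_PATTERN = re.compile(r'"([^"]*)"\s*$')


def count_user_agents(lines):
    """Count requests per User-Agent string; lines without a quoted UA are skipped."""
    counts = Counter()
    for line in lines:
        match = UA_PATTERN.search(line)
        if match:
            counts[match.group(1)] += 1
    return counts


def sentry_requests(lines):
    """Count requests whose User-Agent identifies itself as Sentry ("sentry/...")."""
    return sum(
        n for ua, n in count_user_agents(lines).items()
        if ua.lower().startswith("sentry/")
    )


if __name__ == "__main__":
    sample = [
        '123.123.123.123 GET /bundle/aaaaa.js? "sentry/22.6.0 (https://sentry.io)"',
        '123.123.123.123 GET /bundle/aaaaa.js? "sentry/22.6.0 (https://sentry.io)"',
        '10.0.0.5 GET /index.html "Mozilla/5.0"',
    ]
    print(count_user_agents(sample).most_common())
    print(sentry_requests(sample))
```

Grouping by User-Agent rather than by IP alone is what makes a self-hosted tool like Sentry stand out from ordinary client traffic.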

At first, the development team had trouble figuring out why Sentry kept making requests.

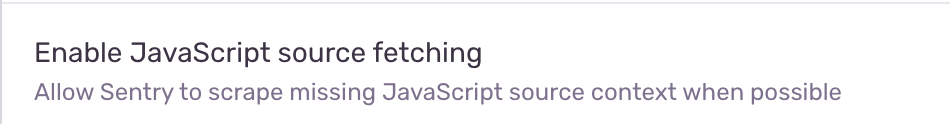

After examining various possibilities, we discovered a very problematic setting.

Wait, Found It!... Oh... There Was Such an Option...

When Sentry captures an error from minified JavaScript, it needs the original JavaScript file (and its source map) to turn the stack trace back into readable source, so it requests that file.

I had assumed these downloads would be sampled, but Sentry was making one request per captured error.

As luck would have it, a deployment the day before had introduced a change that was throwing quite a few errors...

Because bandwidth was the immediate problem, we temporarily turned this option (Enable JavaScript source fetching) off and started looking for a proper fix.

The reason Sentry kept making requests wasn't hard to find. Sentry's own blog explains it:

https://blog.sentry.io/2018/07/17/source-code-fetching/

Sentry had no uploaded files or source maps for this release (version), so it fell back to downloading a fresh copy of the file from our server every time an error occurred.
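The fallback can be pictured with a toy model. This Python sketch is not Sentry's implementation; it only mimics the behavior we observed, assuming uploaded artifacts are looked up by (release, URL) and anything missing is fetched from the web once per processed error. `SourceResolver` and `simulate` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class SourceResolver:
    """Toy model of the observed behavior, NOT Sentry's real code.

    Uploaded artifacts are checked first; if none match the release and URL,
    the file is fetched from the public URL, once per processed error.
    """
    uploaded: dict = field(default_factory=dict)  # (release, url) -> source text
    fetch_count: int = 0

    def fetch_from_web(self, url):
        # Stand-in for the HTTP GET that hit our server and consumed bandwidth.
        self.fetch_count += 1
        return f"<contents of {url}>"

    def resolve(self, release, url):
        artifact = self.uploaded.get((release, url))
        if artifact is not None:
            return artifact  # served from uploaded artifacts, no outbound request
        return self.fetch_from_web(url)  # no artifact for this release: fetch again


def simulate(errors, release, url, uploaded=None):
    """Process `errors` events for one file and return how many web fetches occurred."""
    resolver = SourceResolver(uploaded=dict(uploaded or {}))
    for _ in range(errors):
        resolver.resolve(release, url)
    return resolver.fetch_count


if __name__ == "__main__":
    url = "https://example.com/bundle/aaaaa.js"  # hypothetical URL
    print(simulate(1000, "1.0.0", url))                               # no artifacts
    print(simulate(1000, "1.0.0", url, {("1.0.0", url): "source"}))  # artifacts uploaded
```

With no artifacts, every error turns into a request; with artifacts uploaded for the matching release, none do, and a release mismatch behaves like having no artifacts at all. For a sense of scale, with a hypothetical 2 MB bundle, 1,000 uncovered errors would mean roughly 2 GB of outbound traffic.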

Since errors were occurring continuously in the background, it was essentially a self-inflicted DDoS attack.

Wait, But We Upload Source Maps...

We normally upload source maps as part of every build, so I didn't understand why Sentry was still fetching files.

Digging through the build history, I found that the source map upload step had been failing in Jenkins, but the failure was ignored and the build was marked as successful anyway.

After fixing the Jenkins error and re-enabling source fetching, we confirmed that the traffic had dropped.
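The underlying lesson is that a failing step must fail the build. Here is a minimal Python sketch of a step runner that propagates non-zero exit codes, assuming a pipeline that calls it once per step; `run_step` and `StepFailed` are hypothetical names, and the commands are stand-ins for a real source map upload. In Jenkins itself, a pipeline `sh` step already fails the build on a non-zero exit code unless something such as `|| true` or `returnStatus: true` suppresses it.

```python
import subprocess
import sys


class StepFailed(RuntimeError):
    """Raised when a build step exits with a non-zero status."""


def run_step(name, cmd):
    """Run one build step and fail loudly instead of swallowing the exit code."""
    result = subprocess.run(cmd)
    if result.returncode != 0:
        raise StepFailed(f"step {name!r} failed with exit code {result.returncode}")
    return result.returncode


if __name__ == "__main__":
    # Simulated steps; replace them with your real commands (e.g. the source map upload).
    ok = [sys.executable, "-c", "pass"]
    broken = [sys.executable, "-c", "import sys; sys.exit(1)"]
    run_step("build", ok)
    try:
        run_step("upload source maps", broken)
    except StepFailed as exc:
        # In a real pipeline, let this propagate so the job is marked as failed.
        print(f"caught: {exc}")
```

Catching the exception here is only for the demo; in CI the point is to let it escape so the build goes red.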

Summary

1. A recently deployed feature was causing quite a few errors in the background, all of which Sentry captured.
2. Because there were no uploaded source maps, Sentry's source fetching kicked in and it tried to download the files directly from our server.
3. There was no sampling: it made a request for every error, effectively performing a self-inflicted DDoS.
4. We turned off Sentry Settings -> Project Settings -> CLIENT SECURITY -> Enable JavaScript source fetching and confirmed that traffic returned to normal.
5. It turned out that source map uploads had been failing since an earlier infrastructure change, and nobody had noticed.
6. After fixing issue (5), everything returned to normal.

I still don't know why the Jenkins configuration was changed to ignore failures, but had it been set up to fail properly, we would have caught the problem much sooner.

I'm writing this hoping others won't have to go through the same troubleshooting.