If You're Hesitant About Using Claude Code (a.k.a. If You're Considering a Coding Agent)

Introduction

Recently, there's been a surge of interest in coding agents. Among them, Claude Code is arguably the reigning champion. (Of course, as I write this, Google's Antigravity is also emerging, but in my opinion, Claude Code is still number one.)

I also found it hard to justify spending $100 per month on a personal project, so I'd like to share how I made the decision, along with some insights that might help you make a similar one.

Subscription Costs Are Really Expensive

In the age of subscriptions, the total we pay every month keeps creeping up.

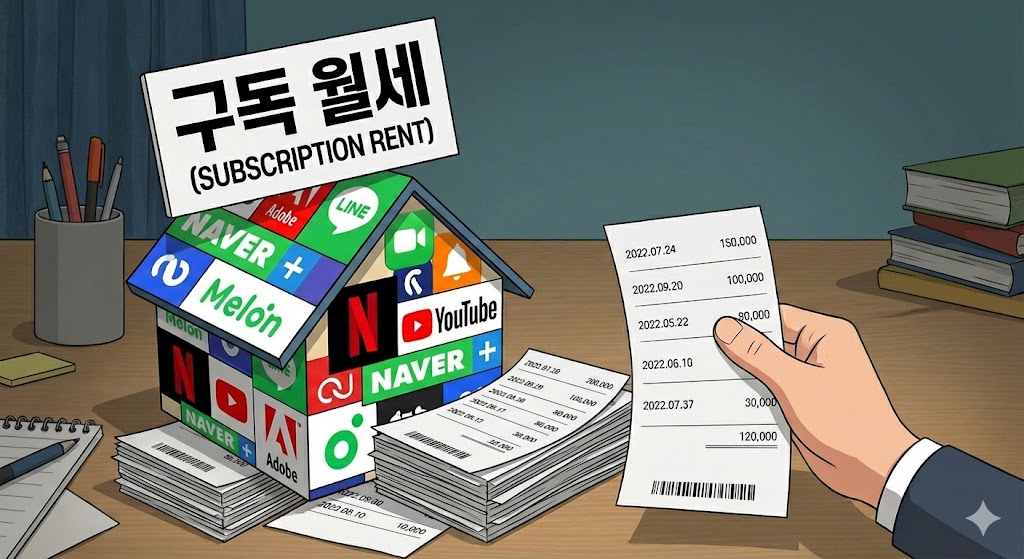

When I tallied up my own expenses, I was paying a whopping 240,000 KRW per month. Even excluding Claude Code, I was spending about 100,000 KRW monthly.

While tallying up these costs, I decided to cut the subscriptions I rarely use.

(The high exchange rate doesn't help either.)

In this situation, spending $100 (about 144,000 KRW) a month on a personal project was truly not an easy decision.

I'm going to describe the process of making that decision.

Vague Thoughts About Coding Agents, Then a Key Employee's Resignation

My thoughts on Coding Agents until October 2025

I haven't been deeply involved with coding agents for very long.

One day before Chuseok (Korean Thanksgiving), my favorite developer said they were leaving the company.

The company's project had a long way to go, and losing not just any employee but a key talent was truly devastating.

As a team leader, I looked for ways to resolve the dire situation. I got approval from the CTO to hire replacements, but honestly, the feeling of despair remained.

At that point, the leader of the technical planning team at my company suggested, "Have you tried Claude Code?" That's when I started to open my eyes to coding agents.

(I wasn't completely new to them, of course. I had been using Cursor and GitHub Copilot for assistance, but they often required more effort than they saved, so I kept them at an assistant level rather than using them for vibe coding.)

Even then, they were still just assistants to me, so I planned to join a PoC simply for the experience.

Then Chuseok started, and after putting the baby to sleep, I wondered what to study and decided to set up Claude Code and give it a try.

E...Eureka

It really can only be described in one word: "Eureka."

I thought I was adopting the latest development methodologies, but suddenly my approach felt as outdated as financial SI development from 10 years ago.

I worked with ChatGPT to write up a "Tech Spec" document for the business logic I wanted to build, chose which APIs to use and what structure to design, then had Claude Code read it and asked it to implement the spec. Decent output started coming out.

I've been developing for 10 years now, but it felt like I had returned to the passion of my third year. Ideas that were just floating in my head started materializing with minimal resources, and the dopamine rush was incredible.

AGENTS.md - A Compass and Navigation Map for Pioneering New Paths

People Always Expect AI to 'Just Figure It Out' Without Telling It Anything

So AI inevitably loses direction, burns tokens, and people say "This garbage isn't worth the money."

I was the same way at first. While the Eureka moment gave me a dopamine rush, I was still annoyed that it required some manual intervention.

So I searched hard for a better method, and found that my approach itself was wrong.

AI also needs a compass and navigation map. AI doesn't know what kind of code would satisfy me.

Let me give you an example. In my Kotlin + Spring setup, I have a rule not to use ResponseEntity in Controllers.

3. Controllers should not use ResponseEntity.

- Bad example: `fun getMe(): ResponseEntity<UserResources.Response.Me>`

- Good example: `fun getMe(): UserResources.Response.Me`

- Error handling should throw custom exceptions (UnauthorizedException, etc.) and be handled in GlobalExceptionHandler.
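To make the rule concrete, here is a minimal sketch of the pattern it describes, written as plain Kotlin so it runs without Spring on the classpath. In a real project the controller would be a `@RestController` and the handler a `@RestControllerAdvice` class with `@ExceptionHandler` methods. The names `Me`, `ErrorBody`, `dispatch`, the token check, and the 401 mapping are all illustrative assumptions, not my actual project code.

```kotlin
// Hypothetical response DTO standing in for UserResources.Response.Me.
data class Me(val id: Long, val name: String)

// Custom exception named in the rule; thrown instead of hand-building an error response.
class UnauthorizedException(message: String) : RuntimeException(message)

// Hypothetical error payload produced by the central handler.
data class ErrorBody(val status: Int, val message: String)

// In Spring this would be a @RestController with a @GetMapping method.
class UserController {
    // Good: return the body type directly, with no ResponseEntity wrapper.
    fun getMe(token: String?): Me {
        if (token.isNullOrBlank()) throw UnauthorizedException("missing token")
        return Me(id = 1, name = "demo")
    }
}

// In Spring this would be a @RestControllerAdvice class with @ExceptionHandler methods.
object GlobalExceptionHandler {
    fun handle(e: Exception): ErrorBody = when (e) {
        is UnauthorizedException -> ErrorBody(401, e.message ?: "unauthorized")
        else -> ErrorBody(500, "internal error")
    }
}

// Stand-in for the framework: call the controller and route any exception to the handler.
fun <T> dispatch(call: () -> T): Any? =
    try {
        call()
    } catch (e: Exception) {
        GlobalExceptionHandler.handle(e)
    }

fun main() {
    val controller = UserController()
    println(dispatch { controller.getMe("abc") }) // success path returns Me
    println(dispatch { controller.getMe(null) })  // failure path returns ErrorBody
}
```

The point of the rule is that the controller signature stays honest about what it returns, while every error-to-status mapping lives in one place the agent can find.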

When you show a bad example next to a good one like this, the coding agent references them and tends to avoid the same mistake in future tasks.

So when I work with coding agents and encounter a convention issue, I don't say "fix this."

I write the rule that the AI got wrong in AGENTS.md myself, then say "Check if there are any rules you violated according to @AGENTS.md."

That was my approach when I first drafted this post. More recently, I've been turning such conventions into lint rules instead.

I write the following in AGENTS.md:

Post-completion TODO

- Run `./gradlew build` to check for build issues

- Run `./gradlew lint` to ensure all lint rules are followed

- Run `./gradlew test` to verify tests pass
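A plain Kotlin/JVM Gradle build (without the Android plugin) has no built-in `lint` task, so something has to define it. One possible wiring is sketched below. It assumes the ktlint Gradle plugin (`org.jlleitschuh.gradle.ktlint`) is already applied so that a `ktlintCheck` task exists; your own project may use a different linter or task name.

```kotlin
// build.gradle.kts (fragment)
// Assumption: the ktlint Gradle plugin is applied elsewhere in this file,
// which is what contributes the `ktlintCheck` task referenced below.
tasks.register("lint") {
    group = "verification"
    description = "Runs all lint checks; the entry point named in AGENTS.md."
    dependsOn("ktlintCheck")
}
```

Giving the agent a single `lint` entry point means AGENTS.md does not need to change when more linters are added behind it.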

"Just Do It"

Now I've given it a compass and map. Does that mean it can just run?

As expected, if you just say "do it," it does things wrong. Now you need to study and research how to properly manage this employee.

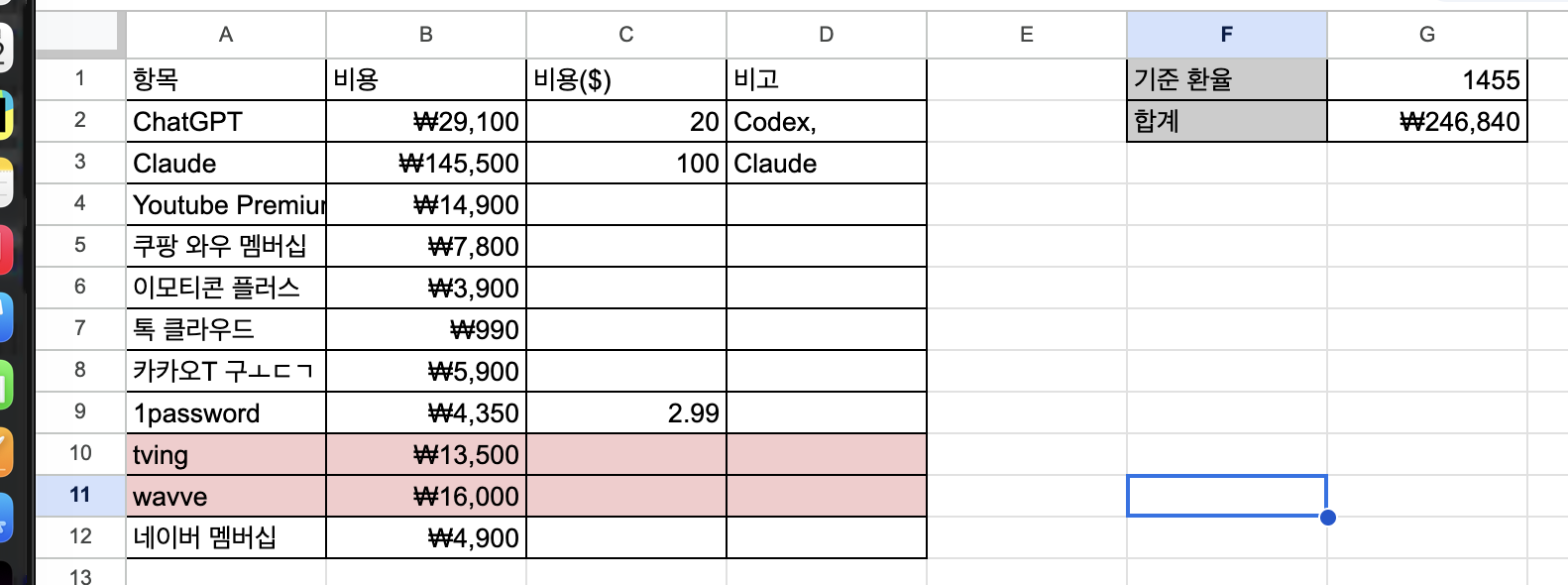

The methodology I use is as follows:

Plan -> Plan Review -> Agent Mode -> Code Review -> Test

1. Define Tech Spec with the Agent in Plan Mode

I want to create a new Manager API (CRUD). There's a parent concept called ParkOwner, and an entity called ParkUser. Only when ParkUser's type is OWNER can they query MANAGERs with the same affiliation. The required response fields are (id, name, created date, modified date, phone number).

- List API

- Detail API (this one also needs parking lot count)

- Update API

- Delete API

When you request this, the coding agent starts searching the codebase.
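For reference, the access rule in that request ("only an OWNER can query MANAGERs with the same affiliation") boils down to something like the sketch below. This is not the code the agent produced. The field names, `parkOwnerId` as the affiliation key, and `ForbiddenException` are assumptions made for illustration, and an in-memory list stands in for the repository.

```kotlin
enum class ParkUserType { OWNER, MANAGER }

// Hypothetical shape of ParkUser; field names are assumptions for illustration.
data class ParkUser(
    val id: Long,
    val name: String,
    val type: ParkUserType,
    val parkOwnerId: Long, // affiliation: the ParkOwner this user belongs to
)

// Hypothetical exception; in the real project this would be one of the custom exceptions.
class ForbiddenException(message: String) : RuntimeException(message)

// List API rule: only an OWNER may list MANAGERs, and only within the same ParkOwner.
fun listManagers(requester: ParkUser, allUsers: List<ParkUser>): List<ParkUser> {
    if (requester.type != ParkUserType.OWNER) {
        throw ForbiddenException("only OWNER can query managers")
    }
    return allUsers.filter {
        it.type == ParkUserType.MANAGER && it.parkOwnerId == requester.parkOwnerId
    }
}

fun main() {
    val owner = ParkUser(1, "owner", ParkUserType.OWNER, parkOwnerId = 10)
    val users = listOf(
        owner,
        ParkUser(2, "manager-a", ParkUserType.MANAGER, parkOwnerId = 10),
        ParkUser(3, "manager-b", ParkUserType.MANAGER, parkOwnerId = 20),
    )
    println(listManagers(owner, users).map { it.name }) // prints [manager-a]
}
```

Writing the rule down this explicitly in the request is what lets the agent ask sharp follow-up questions instead of guessing.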

2. Start Q&A based on the work from step 1

- Is the name field from ParkOwner's name? Or from getUserInfo via KakaoAccountClient?

-> It should be from KakaoAccountClient's getUserInfo, but for this task I should also modify it to save the name during registration.

Once you do this, the AI requests a review of the final change spec. After completing the review, you just say "proceed."

3. Watch the agent code

I know this isn't easy. However, even with a well-written plan from Plan Mode, the agent sometimes goes off track.

I think it's important to step in before it burns through tokens going the wrong way.

4. Code review and testing

AI still makes mistakes, and even with a compass and map, it can't always reach the exact destination.

So review is necessary. When AI completes a task, I do basic code review and testing.

This is the stage where you hear a lot about "AI firing."

(AI firing? It means committing and pushing whatever the AI wrote and leaving the testing to someone else. It's a very bad habit.)

I've seen people around me "AI fire" their code, and it's truly terrible. One person pushed code that didn't even run and then asked, "Why isn't this working?" (They hadn't run it locally even once.)

And I always do code review. Even if I can't review line by line, I at least look at what role each class plays and understand it.

The reason is that a friend recently told me this:

"Coding agents are great, but now when errors occur, I don't know where the problem started."

At that moment, it felt like getting hit on the head. I couldn't remember the code I had written up to that point either.

After receiving this shock, I decided to at least review most code.

Prompt Like a Leader Providing Direction Rather Than Just Saying "Do It"

As the subtitle says, "implement this feature" or "code it this way" is fine, but you should request it as if asking a team member.

You need to provide context and direction like "the direction is this, so let's abstract it this way" or "I don't think we need to make this part Common."

In my case, I'm developing an app like Raycast, and early on I worked with Claude Code to structure the search functionality as plugins.

When I added new features later, it found its way without losing direction.

(Of course, the relevant content is described in AGENTS.md. But it follows along well even without AGENTS.md.)

$100/month (144,000 KRW) Is Too Burdensome

I too found it very burdensome to spend $100 every month at first.

But something a friend said resonated so much that I've continued paying and enjoying making fun personal products.

"$100 is cheap. To hire one employee, you start at minimum $2,000." "An employee who's on standby 24/7 and does what I tell them to do for $100? Cheap, cheap."

They're right. Even as I write this, my agent is diligently at work, building the products I want.

After hearing this, $100 actually started to feel cheap (is this gaslighting?).

If the cost feels burdensome to you reading this, think of it as hiring an employee and run it every day to get your money's worth.

Conclusion

There's a saying "turn crisis into opportunity." This key employee's resignation was a major crisis for me, but after discovering coding agents, it seems to have turned into an opportunity.

Recently, I've been stressed about running out of ideas. I had been recording products I wanted to make in Notion, and over the past month with Claude Code, I've made everything I wanted. I'm having the happy dilemma of "what should I do next?"

Of course, I'm also worried.

In my estimation, developers who only write simple code will be replaced within the next 5 years. Rather than "developer," I think roles like Code Validator or Code Responsibility (someone who verifies the code, someone who is accountable for it) might emerge.

The wave of change has now begun. Coding agents that started early this year are now, at year's end, raising questions about whether they might replace developers, and new AIs are pouring out every month and every week. Now we have no choice but to follow this trend.

Haven't tried a coding agent yet? It's not too late. I recommend starting now.